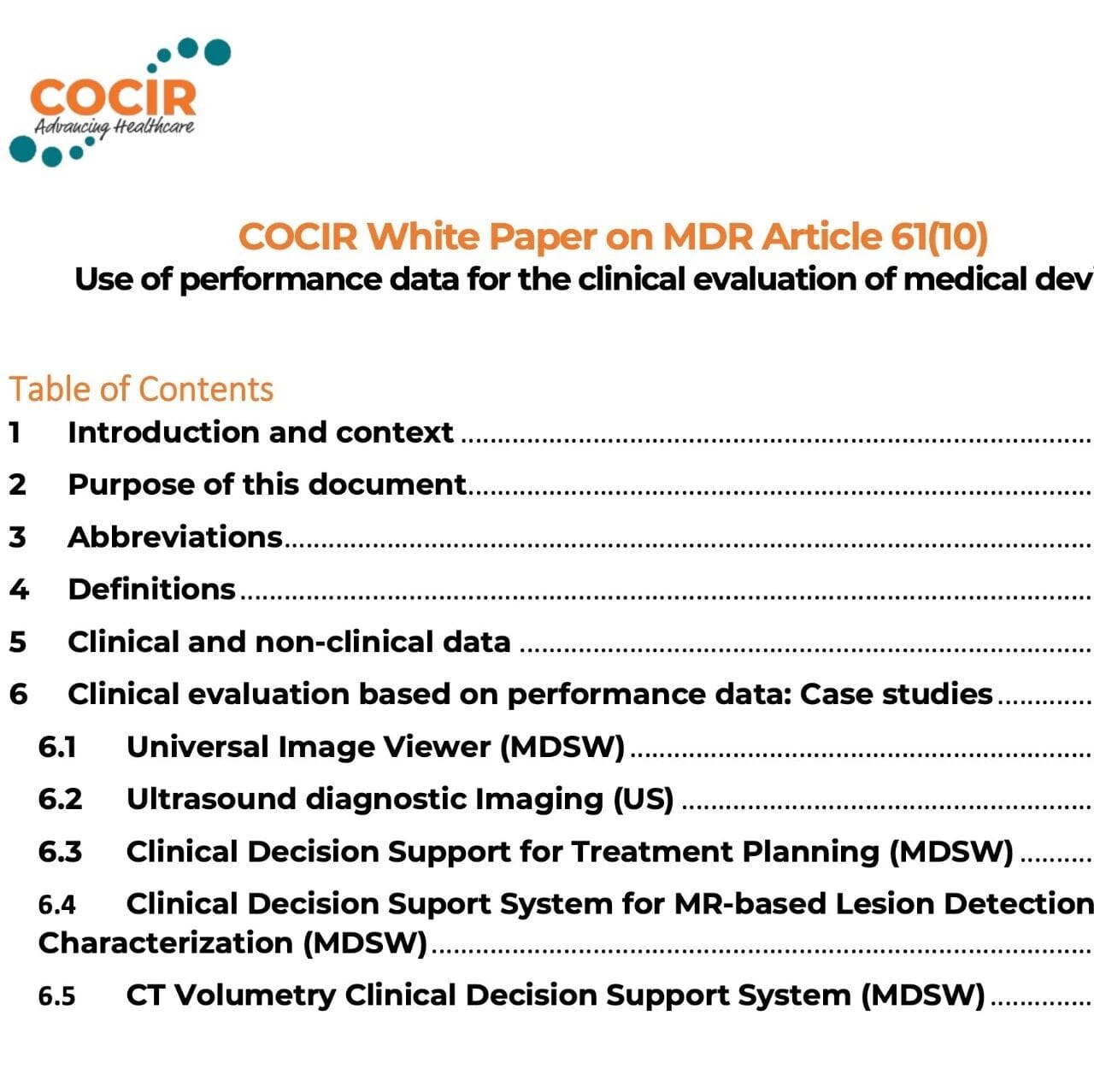

COCIR (European Coordination Committee of the Radiological, Electromedical and Healthcare IT Industry) is the industry association representing Europe's radiological imaging, electromedical equipment, and healthcare IT sectors. It has long participated in technical and policy discussions related to EU medical device regulations, including the MDR. Its position papers and white papers are widely used by manufacturers, Notified Bodies, and regulators as references for understanding and addressing complex compliance issues, and therefore carry substantial industry influence and professional credibility.

Before we begin: all conclusions and statements in this article are drawn strictly from the original COCIR white paper and the logic of the cases it presents. I am only providing a structured interpretation, from the perspective of a CRO focused on clinical and regulatory compliance services, of its regulatory implications, evidence-chain construction, and implementation actions, without introducing any additional external views or new facts.

I. Background and Core Theme of This White Paper

The white paper starts from MDR Article 61(1) and 61(10). The general principle is to use clinical data to provide sufficient clinical evidence demonstrating that the device, under its intended purpose, conforms to the GSPR, that undesirable side effects have been evaluated, and that the benefit-risk ratio is acceptable. At the same time, Article 61(10) allows, in specific circumstances, conformity with the GSPR to be demonstrated using non-clinical data only, provided that the manufacturer gives adequate justification based on the results of risk assessment, the benefit-risk profile, and consideration of the characteristics of the interaction between the device and the human body, the intended purpose, and the manufacturer's claims.

The white paper clearly states that there is uncertainty in practice regarding the interpretation and proper application of Article 61(10), particularly for low- to medium-risk devices (such as Class IIa) and medium- to higher-risk devices (such as Class IIb). The regulation does not necessarily require clinical investigations for these classes to demonstrate conformity with the GSPR. This uncertainty can lead to disputes among stakeholders, including manufacturers and Notified Bodies.

Accordingly, the purpose of this white paper is not to replace all clinical evidence with non-clinical data. Rather, it shows, for a specific category of devices (particularly certain software and imaging-related devices), how scientifically valid non-clinical testing and, in specific situations, retrospective human data can serve as an effective pathway for clinical performance testing at both the initial CE marking and post-market stages, while emphasizing that long-term assumptions must be verified through PMCF.

Important boundary: the white paper explicitly does not apply to Class III devices or implantables.

II. Key Concepts: Clinical Data vs. Non-clinical Data, and the Role of Retrospective Human Data

Based on the definitions in MDR Article 2, the white paper describes clinical data as information concerning safety or performance that is generated from the use of a medical device, generally implying use of the device in humans (patients or healthy volunteers). Sources may include clinical investigations, PMCF studies and other PMCF data, post-market surveillance data, and reports of clinical experience (such as case studies and compassionate use). Such data may be generated by the manufacturer or drawn from public sources (scientific literature, vigilance databases, etc.), and may relate either to the device under evaluation or to a device for which equivalence has been demonstrated.

By contrast, non-clinical data are safety/performance-related data that do not involve patients or healthy volunteers, such as engineering or laboratory testing, animal studies, biocompatibility testing, phantom studies, reader studies using synthetic patient data, software V&V, and simulated-use modeling.

The white paper places particular emphasis on retrospective human data as a potentially primary body of evidence for certain devices. For example, when medical device software (MDSW) is used for image post-processing or clinical decision support, previously acquired diagnostic images, health records, registries, and similar sources generated in routine clinical practice may be used as controlled data pools to obtain accurate, reliable, and reproducible results.

Typical uses of retrospective human data identified in the white paper include:

- (a) Algorithm training and validation (development stage)

- (b) Retrospective cohort studies to assess long-term performance (PMCF)

- (c) Assessment of image quality, or correlation comparisons between outputs from new software and other devices with the same intended purpose
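
The correlation comparison in item (c) can be illustrated with a minimal Python sketch. It assumes paired quantitative outputs (for example, a measurement produced for the same retrospective cases by the new software and by a comparator device); the function names, the sample values, and the choice of Pearson correlation plus mean bias are illustrative assumptions, not methods prescribed by the white paper.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equally long lists of paired outputs."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two paired lists with at least two values")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant input")
    return sxy / sqrt(sxx * syy)


def mean_bias(new, reference):
    """Average signed difference (new minus reference) over the paired cases."""
    if len(new) != len(reference) or not new:
        raise ValueError("need two non-empty paired lists")
    return sum(a - b for a, b in zip(new, reference)) / len(new)


# Hypothetical paired measurements on the same retrospective cases.
new_software = [10.0, 20.0, 30.0, 40.0]
comparator = [11.0, 21.0, 31.0, 41.0]
r = pearson_r(new_software, comparator)
bias = mean_bias(new_software, comparator)
```

A high correlation alone does not show agreement, which is why the sketch also reports the mean bias: in the hypothetical data the two devices correlate perfectly while the new software reads one unit lower on every case.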

III. Article 61(10) Is Not "No Clinical Evidence"; It Means "A Different Evidence Form + Greater Scientific Rigor + PMCF as the Backstop"

From the perspective of a CRO supporting communication between manufacturers and Notified Bodies, the white paper's implicit expectations for Article 61(10) can be broken down into three lines of regulatory logic:

1. Shift in evidence form: scenarios in which clinical outcomes or patient benefits cannot be measured directly are translated into measurable surrogate indicators such as performance, image quality, and algorithm accuracy.

2. Higher scientific threshold: if non-clinical or retrospective data are to be relied on, the methodology, test-case design, and outputs must be shown to be scientifically valid and generalizable to the intended clinical use environment.

3. Post-market verification is indispensable: long-term routine-use assumptions derived from non-clinical data, clinical data from equivalent devices, or the device's own clinical investigations must be verified through PMCF.

The white paper also notes that technological development is expanding the testing environment: digital twins, curated databases, computer modeling, physical or digital phantoms, and synthetic patient generation may all become controlled and scientifically valid sources of non-clinical data. However, the key review points remain: (i) whether the methodology is scientifically valid; (ii) whether the results can be extrapolated to the intended clinical use; and (iii) whether the evidence is sufficient to cover all clinically relevant characteristics and manufacturer claims, thereby demonstrating conformity with the applicable GSPR.

IV. The Common Structure Behind the Five Cases: Breaking Clinical Evaluation into "Intended Purpose - Benefit - Risk - Technical Verification - Performance Surrogates - PMCF Verification"

Using five cases, the white paper demonstrates a practical pathway for conducting clinical evaluation based on performance data, while clearly stating that these cases do not represent a complete clinical evaluation, which must still comply with the MDR and applicable guidance, such as MEDDEV 2.7/1 rev.4 and MDCG 2020-1 for software.

From a CRO reusability perspective, all five cases share the same writing and justification framework:

- Define the intended purpose and users clearly (who uses it, at what clinical stage, and what the output is)

- Define clinical benefit, which is often indirect benefit (supporting decision-making or workflow rather than directly delivering a treatment or diagnostic outcome)

- Contextualize risk: the most serious harms usually arise from incorrect or delayed decisions (misdiagnosis, delayed treatment, incorrect treatment, etc.)

- Establish technical performance first by completing V&V, usability, human factors engineering, and related activities against applicable standards

- Assess intended clinical performance using surrogate endpoints, such as image quality, segmentation/detection accuracy, ROC, and multi-reader multi-case studies

- Verify long-term effectiveness and stability in routine use through PMCF (including literature surveillance, complaint trending, site studies, and prospective/retrospective PMCF)
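
Two of the surrogate endpoints named in this framework, segmentation accuracy and detection accuracy, can be made concrete with a minimal Python sketch. It assumes binary masks and per-case detection flags encoded as flat lists of 0/1 values with an established ground truth; the function names and the choice of the Dice coefficient as the overlap metric are illustrative assumptions rather than requirements taken from the white paper.

```python
def dice_coefficient(predicted, reference):
    """Dice overlap between two binary masks given as flat 0/1 sequences."""
    if len(predicted) != len(reference):
        raise ValueError("masks must have the same length")
    overlap = sum(1 for p, r in zip(predicted, reference) if p and r)
    total = sum(1 for p in predicted if p) + sum(1 for r in reference if r)
    # Two empty masks agree perfectly by convention.
    return 1.0 if total == 0 else 2.0 * overlap / total


def sensitivity_specificity(predicted, truth):
    """Per-case detection sensitivity and specificity against ground truth."""
    if len(predicted) != len(truth):
        raise ValueError("inputs must have the same length")
    pairs = list(zip(predicted, truth))
    tp = sum(1 for p, t in pairs if p and t)
    tn = sum(1 for p, t in pairs if not p and not t)
    fp = sum(1 for p, t in pairs if p and not t)
    fn = sum(1 for p, t in pairs if not p and t)
    # Report NaN when a class is absent instead of dividing by zero.
    sens = tp / (tp + fn) if (tp + fn) else float("nan")
    spec = tn / (tn + fp) if (tn + fp) else float("nan")
    return sens, spec


# Hypothetical example: one voxel of overlap between a 2-voxel prediction
# and a 1-voxel reference gives Dice = 2 * 1 / (2 + 1).
dice = dice_coefficient([1, 1, 0, 0], [1, 0, 0, 0])
sens, spec = sensitivity_specificity([1, 1, 0, 0], [1, 0, 0, 1])
```

Metrics like these only become clinical evidence once acceptance criteria are tied to the intended purpose and the risk analysis; the numbers by themselves say nothing about whether the performance is sufficient.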

V. Deep Dive by Case: How the White Paper Turns Performance Data into Clinical Evidence

Case 1: Universal Image Viewer (MDSW)

Positioning: Used for reference and diagnostic viewing within enterprise imaging solutions, supporting multispecialty data aggregation and basic image operations/measurements; diagnostic responsibility remains with the trained physician.

Clinical benefit: The white paper adopts a typical indirect formulation. As a workflow support tool, the benefit cannot be expressed through measurable patient-related outcomes; the clinical relevance of its output derives from image quality, an appropriate tool set (per standards), periodic verification of medical displays, predictable/accurate/reliable performance, and a UI that reduces fatigue and improves reading performance.

Risk: The worst-case scenario is misdiagnosis, delayed diagnosis, or incorrect treatment, triggered by causes such as poor image quality, incomplete information/interoperability issues, data corruption/loss, wrong patient/examination selection, or inaccurate measurements. The expectation of relatively low risk depends on the product meeting end-user needs and functioning as intended by the manufacturer.

Evidence strategy: Because direct measurement of clinical diagnostic accuracy and of impact on treatment/outcomes is very difficult, the white paper proposes using diagnostic image quality as a surrogate for clinical performance and assessing it through task-based observer studies (reader studies). The rationale is that the current literature in this field typically combines quantitative (physical attributes) and qualitative (reader-study) image-quality assessment.

Technical evidence and PMCF: Software V&V is completed in accordance with IEC 62304 and IEC 82304-1, and usability in accordance with IEC 62366-1. Because the algorithm is deterministic, performance is not expected to change over the lifecycle. PMCF focuses on literature surveillance (changes in the clinical State of the Art) and complaint trends.

Case 2: Ultrasound Diagnostic Imaging (US)

Positioning: Uses high-frequency sound waves to generate anatomical images and real-time motion and blood-flow information to support diagnostic decision-making by sonographers/ultrasound physicians. The technology has inherent limitations (for example, obesity and air barriers such as lung or bowel gas can affect imaging), and healthcare professionals remain ultimately responsible for determining whether image quality is sufficient to support the decision at that time.

Clinical benefit: The white paper again defines this as indirect benefit: the device does not itself deliver a treatment or diagnostic outcome directly, but supports decision-making or assists other devices in achieving their intended purpose during minimally invasive procedures.

Risk: The device does not use ionizing radiation. Regarding potential biological effects of ultrasound energy (tissue heating, gas formation, etc.), the white paper cites the AIUM position that, since the 1950s, there have been no independently confirmed reports of adverse effects in humans in the absence of contrast agents; however, it still emphasizes that non-medical use, especially fetal ultrasound, should be avoided. In addition, insufficient image quality, measurement errors, or missing data may lead to incorrect or delayed decisions; coupling agents (ultrasound gel) may cause sensitization and have been associated with serious infections due to microbial contamination; the risks of contrast-enhanced ultrasound are governed by the applicable medicinal product labeling.

Evidence strategy: Use image quality as a surrogate for clinical performance, and conduct QC/performance evaluation testing with phantoms. The white paper provides a detailed list of elements that can be evaluated using phantoms (uniformity, sensitivity, geometric accuracy, contrast/spatial resolution, display fidelity, Doppler and elastography, etc.), and distinguishes between absolute capability (repeat measurements against quantifiable standards) and relative capability (parallel comparison with devices representative of the current clinical State of the Art).

Technology and PMCF: The paper cites multiple ultrasound-related IEC/ISO standards (safety, output reporting, stability, phantom methods, biocompatibility, usability, etc.). PMCF focuses primarily on literature surveillance and proactive complaint monitoring, taking into account signals and new risks within the same generic device group.

Case 3: Clinical Decision Support Software for Treatment Planning (MDSW)

Positioning: A multimodal platform aggregates medical records, laboratory, and imaging information; it uses natural language processing to screen information and map it to cancer scoring indices/guidelines to provide treatment pathway recommendations. It does not make diagnostic decisions, and its outputs must be confirmed or rejected by the physician in the interface.

Risks: Incorrect or delayed treatment decisions (due to erroneous or unclear inputs or outputs), or delays caused by system unavailability.

Evidence strategy: This is divided into two parts.

(1) NLP capability: in a standardized environment, test the generalization capability for semantic variants using a curated manual medical-record database and correlate results with expected values; then conduct a registry study using raw real-patient health data from a defined time window to evaluate data-mining quality (accuracy, reliability, truthfulness, precision), while also assessing output data rate, availability, confidentiality, integrity, and potential cybersecurity vulnerabilities across sites with different IT infrastructures.

(2) Treatment pathways: in a simulated use environment, use an "artificial patient" dataset (including normal, abnormal, and implausible/incomplete information), with readers of different experience levels performing a double-blind crossover read; correlate the outputs with the annotated results defined in the guidelines, and record time-to-result for both automated and manual decision-making.

PMCF: Because this is an innovative technology, a specific PMCF methodology is used: representative sites are selected to reduce bias; the software records physicians' manual corrections to the outputs and time-to-result, and collects user experience as well as performance/safety issues through interface questionnaires after each use; the manufacturer monitors signals and performs trend analysis of complaints.

Case 4: MR-Based Lesion Detection & Characterization Decision Support System (MDSW)

Positioning: It performs automated segmentation and quantitative feature extraction on contrast-enhanced MR images and flags suspected tumor lesions; it does not make diagnostic decisions, and its outputs must be confirmed or rejected by the physician.

Evidence strategy: First, a 4D digital phantom (based on real CT/MR datasets) is used to build a controllable "realistic anatomical model," because comparable ground truth is lacking for in vivo quantitative reconstruction due to variability caused by tissue differences, perfusion dynamics, and other factors. The phantom simulates physiological differences such as brain-region size, age, and population group, and introduces artificial lesions of different sizes, shapes, and configurations, as well as different perfusion patterns, to evaluate segmentation and detection capability. Subsequently, in a retrospective reader study, radiologists read datasets from already diagnosed and histologically confirmed tumors with and without software assistance, and performance is compared using multi-reader multi-case ROC analysis; if the use of the software improves detection accuracy based on histology and follow-up, its effectiveness can be demonstrated.

PMCF: A two-arm prospective PMCF study at reference sites is used to evaluate performance and safety in routine tumor scenarios (with endpoints including consistency, efficiency, accuracy, etc.). In addition, retrospective analysis of hospital-registry DICOM data is conducted at representative sites, comparing AI-assisted accuracy against follow-up history information.

Case 5: CT Volumetry Clinical Decision Support System (MDSW)

Positioning: It performs 3D segmentation and volumetric analysis of CT images for tumor assessment/treatment monitoring, and can also be used for stroke and pulmonary disease; outputs are compared against a normative database and deviations are flagged, with support for longitudinal recording or retrospective re-analysis.

Evidence strategy: First, a standardized dataset is generated through repeated scans of a physical 4D phantom to verify capability for lesion delineation and tissue discrimination in a controlled environment. Then a digital phantom based on real imaging datasets is used to simulate anatomical variability and moving targets, including non-spherical lesions changing over time, in order to evaluate volumetric measurement accuracy and limit of detection through repeated measurements, and to analyze correlation with and predictive value against the normative database. Clinical sensitivity/specificity is then validated through a retrospective study using annotated DICOM datasets across multiple disease types, correlating software outputs with manual ROI reconstruction/contouring (standard of care). In addition, the white paper emphasizes that assessing clinical accuracy across different tissues and complex differential diagnoses requires standardized ground truth, which can be obtained by aggregating follow-up information and analyzing retrospective imaging databases; in prospective imaging studies, by contrast, confirmation information is often not immediately available.

PMCF: This is divided into two phases. The first phase is a two-arm prospective PMCF study at reference sites, comparing against standard of care without adding invasiveness or burden. The second phase is a multicenter prospective PMCF study, for example a randomized study comparing stroke outcomes (recurrence, functional outcome, death, survival, morbidity, etc.), as well as evaluating whether automated CT volumetry can replace the semi-automated standard of care for tumor staging.

VI. Key takeaways: the 6 most useful conclusions from the white paper for a company's clinical evaluation pathway

- Article 61(10) allows conformity with the GSPR to be demonstrated using non-clinical data alone in specific circumstances, but this must be supported by adequate justification based on risk assessment, benefit-risk, the characteristics of human interaction, the intended purpose, and the manufacturer's claims.

- Non-clinical data are not merely "engineering tests done at will"; they should reflect a controlled, standardized, reproducible scientific design. Methods such as digital twins, phantoms, modeling, artificial patients, and curated databases are explicitly identified as potentially valid pathways.

- For many imaging and software devices, using "patient outcomes" directly as endpoints is often not feasible; therefore, the white paper presents image quality, algorithm accuracy, reader studies (including ROC), and time-to-result as mainstream surrogate endpoints.

- Retrospective human data are regarded as an important body of evidence in software, imaging post-processing, and clinical decision support scenarios, but their value comes from the accurate, reliable, and reproducible results enabled by a "controlled data pool," not because they are "more like clinical practice."

- The white paper repeatedly emphasizes extrapolation: the evidence must cover all clinically relevant characteristics and the manufacturer's claims, and it must be extrapolatable to the intended clinical use environment; otherwise, even a large volume of data will be insufficient to support the conclusion.

- Regardless of the form of evidence selected for the initial clinical evaluation, the long-term performance/safety assumptions derived from that evidence must be verified in PMCF and implemented through a combination of literature surveillance, complaint trending, site studies, and prospective/retrospective PMCF.
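
To make the reader-study surrogate endpoint more tangible, the following minimal Python sketch compares per-reader ROC area (AUC) with and without software assistance. It assumes each reader assigns a confidence score to every case and that ground truth is known (for example, from histology); the function names, reader labels, and scores are hypothetical. A full multi-reader multi-case analysis would additionally model reader and case variability, which this sketch deliberately omits.

```python
def auc(scores, labels):
    """Empirical ROC area: probability that a positive case outscores a
    negative case, with ties counted as one half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


def auc_gain_per_reader(unaided, aided, labels):
    """Return ({reader: aided AUC - unaided AUC}, mean gain over readers).

    unaided / aided map a reader id to that reader's confidence scores,
    listed in the same case order as labels.
    """
    gains = {
        reader: auc(aided[reader], labels) - auc(unaided[reader], labels)
        for reader in unaided
    }
    return gains, sum(gains.values()) / len(gains)


# Hypothetical scores from two readers on four cases (1 = confirmed lesion).
labels = [1, 1, 0, 0]
unaided = {"reader_a": [3, 2, 3, 1], "reader_b": [4, 2, 2, 1]}
aided = {"reader_a": [5, 4, 2, 1], "reader_b": [5, 3, 2, 1]}
gains, mean_gain = auc_gain_per_reader(unaided, aided, labels)
# gains == {"reader_a": 0.375, "reader_b": 0.125}; mean_gain == 0.25
```

Keeping the gain per reader visible, instead of reporting only the average, helps show whether the benefit holds across experience levels or is driven by a single reader.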

VII. Practical CRO recommendations (strictly extrapolated from the white paper's logic into "how to write it/how to do it")

The following are not new requirements, but a project execution checklist distilled from the "reviewable elements" repeatedly appearing across the white paper's case examples:

- Write the intended purpose as a claim that can be covered by testing: what the output is, who uses it, and for which clinical decision or workflow step it is used.

- When the benefit is presented as an "indirect benefit," a verifiable performance surrogate must be provided at the same time (such as quantifiable metrics for image quality, detection accuracy, error reduction, time savings, etc.).

- Let hazard scenarios drive the evidence strategy: around worst-case situations such as misdiagnosis, delay, and incorrect treatment, define the critical performance characteristics and boundary conditions that must be covered by performance data.

- Design controlled testing: prioritize standardized protocols and controlled environments (phantoms, digital phantoms, modeling, artificial patients, curated databases), establish ground truth, and control bias.

- Use reader studies to connect "performance" to "clinical relevance": multi-reader multi-case design, double-blind methods, ROC, and comparison against standard of care/unaided reading are bridges repeatedly used in the white paper.

- Demonstrate extrapolation and generalizability: provide scenario coverage for semantic variants, different anatomical variations, different IT environments, etc., and clearly establish consistency with the intended clinical use environment.

- Write PMCF as the mechanism for validating long-term assumptions: literature surveillance + complaint trending + site studies + prospective/retrospective PMCF, forming a closed loop.
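
As one illustration of the complaint-trending element in the last item, the minimal Python sketch below normalizes complaint counts by units in use and flags periods that exceed a baseline threshold. The rate per 1,000 units, the four-period baseline, and the "mean plus two standard deviations" rule are assumptions chosen for illustration; the white paper does not prescribe a specific trending method, and a real PMCF plan would justify its own thresholds.

```python
from statistics import mean, pstdev


def complaint_rates(complaints, units_in_use):
    """Complaints per 1,000 units in use for each reporting period."""
    if len(complaints) != len(units_in_use):
        raise ValueError("inputs must cover the same periods")
    return [1000.0 * c / u for c, u in zip(complaints, units_in_use)]


def flag_signals(rates, baseline_periods=4, k=2.0):
    """Indices of periods after the baseline whose rate exceeds
    baseline mean + k * baseline standard deviation (hypothetical rule)."""
    if len(rates) <= baseline_periods:
        return []
    baseline = rates[:baseline_periods]
    threshold = mean(baseline) + k * pstdev(baseline)
    return [
        i for i, rate in enumerate(rates)
        if i >= baseline_periods and rate > threshold
    ]


# Hypothetical quarterly data: the sixth quarter stands out.
rates = complaint_rates([2, 3, 2, 3, 2, 9], [1000] * 6)
signals = flag_signals(rates)  # [5]
```

A flagged period is only a trigger for investigation; whether it reflects a new risk or a reporting artifact still has to be assessed against the risk management file.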

Conclusion: Turn Article 61(10) into an evidence chain that is communicable, reviewable, and reproducible

The most important message the white paper offers companies is this: when a device, especially an imaging or software device, serves clinically more as decision support/workflow support, the key to clinical evaluation is not to force pursuit of hard-to-quantify patient outcomes, but to build a reviewable chain of evidence: use controlled and scientifically valid performance data to cover the claims and risks, and use PMCF to verify long-term assumptions in practice. This pathway does not lower the compliance threshold; rather, it shifts the threshold from "whether a clinical trial is conducted" to "whether the methodology is scientifically sound and whether the evidence chain is closed-loop."

We wanted to share this COCIR white paper systematically not as a theoretical discussion, but because of experience in real regulatory practice.

In several past projects handled by the Clinsota team, the core logic and evidence-construction approach set out in this white paper served as the primary reference framework. Combined with each product's own risk profile, intended purpose, and performance evidence, it supported multiple rounds of in-depth communication between companies and reviewers, ultimately securing acceptance from a U.S. clinical reviewer for the MDR Article 61(10) pathway.

This strategy enabled companies to avoid the originally anticipated large-scale clinical trials without lowering compliance requirements. It not only saved millions in clinical study costs but also substantially shortened the time to market, giving companies a critical window of time and capital.

Precisely because this pathway has already been validated as feasible and referenceable in real-world regulatory settings, we hope to systematically break down and share the core ideas and implementation logic of this white paper with more peers working in regulatory affairs and clinical evaluation, offering some ideas and points for reflection.
